Why Observability Matters for Agentic Systems

Linda Vu Nguyen

The shift from deterministic to autonomous

Traditional software is predictable. A request comes in, code executes, a response goes out. Given the same input, you get the same output. Monitoring this kind of software is well understood: track requests, measure latency, alert on errors.

AI agents work differently. An agent receives a goal and decides how to achieve it. It selects tools, accesses data, chains actions together, and produces an outcome. The same goal can lead to different tool calls, different data access patterns, and different results on every run. The behavior isn’t defined in advance. It emerges at runtime.

This is a fundamental shift, and it changes what observability needs to do.

Why traditional monitoring falls short

When software is deterministic, monitoring answers straightforward questions. Did the request succeed? How long did it take? Where did the error occur?

When software is autonomous, the questions change. What did the agent do? Why did it make that choice? What data did it access along the way? Was any of that behavior outside the bounds of what it should have done?

Traditional monitoring can tell you that an agent run completed and how long it took. It can’t tell you what happened inside that run. The chain of decisions, tool calls, data access, and execution that connects a goal to an outcome is invisible.

This is what we call the Agentic Execution Gap: the space between an agent’s decision and its outcome where existing tools have no visibility.

What agentic observability looks like

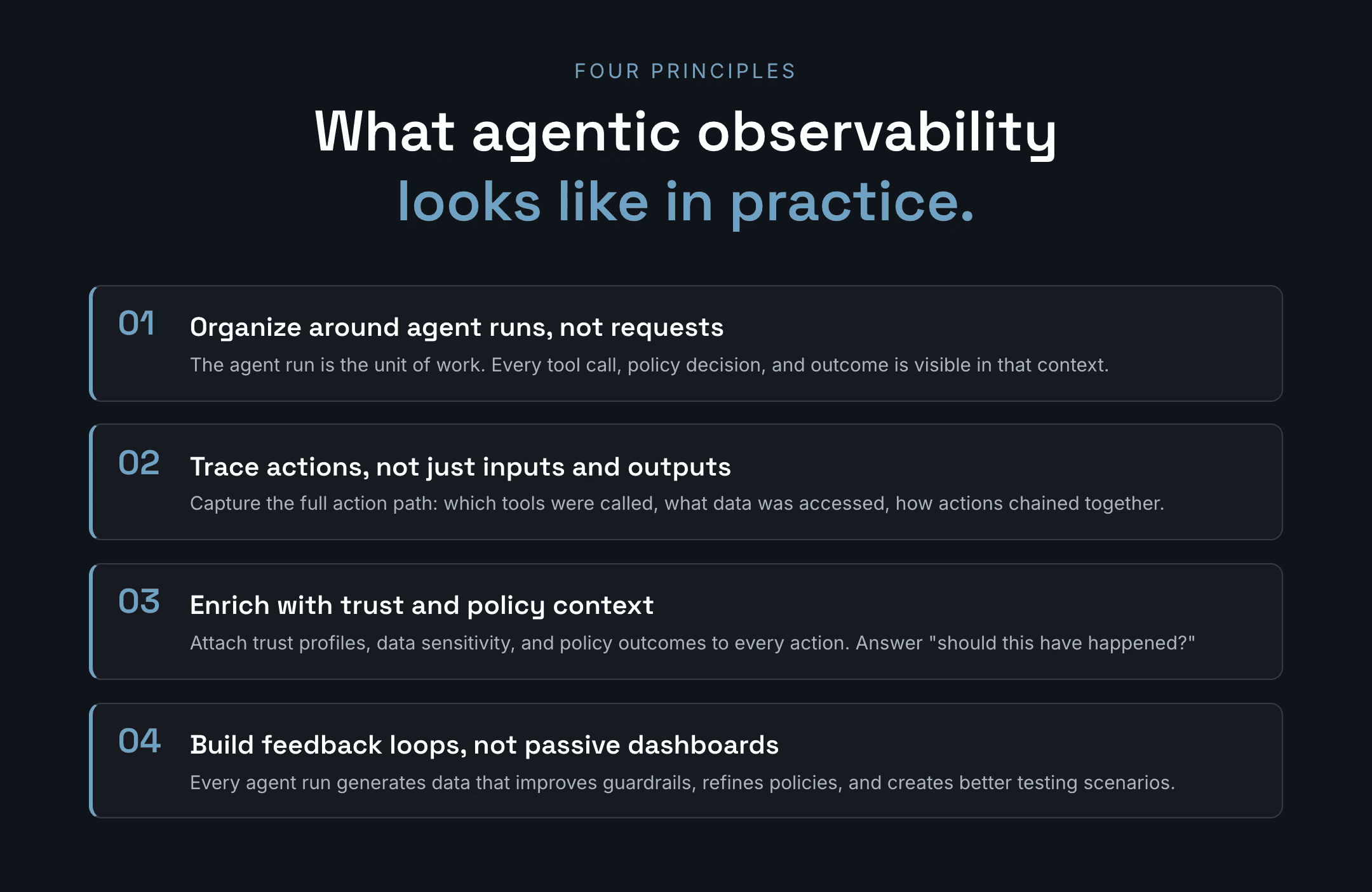

Closing the Agentic Execution Gap requires observability built around how agents actually work. Four principles define what that means in practice.

Organize around agent runs, not requests. Traditional monitoring follows requests through a call stack. Agentic observability follows an agent through its decisions: which tools it selected, what services it called, what policies shaped its behavior, and what happened as a result. The agent run is the organizing unit, not the HTTP request.

Trace actions, not just inputs and outputs. Knowing what an agent was asked to do and what it returned is not enough. The critical information lives in between: which tools were called, what data was accessed, what code was executed, and how those actions chained together. Observability needs to capture the full action path from decision to outcome.
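To make the first two principles concrete, here is a minimal sketch of a run-centric trace. It is an illustration under stated assumptions, not a description of any particular product: the names `AgentRun`, `Action`, `record`, and `path_to`, along with the example tools, are hypothetical. Each action stores a link to the action that led to it, so the path from decision to outcome can be rebuilt.

```python
from dataclasses import dataclass, field
from typing import Any, Dict, List, Optional
import time
import uuid


@dataclass
class Action:
    """One step inside an agent run (hypothetical schema)."""
    kind: str                      # e.g. "tool_call", "data_access", "code_exec"
    name: str                      # tool, dataset, or script name
    detail: Dict[str, Any]         # free-form arguments or metadata
    parent_id: Optional[str]       # the action that led to this one, if any
    action_id: str = field(default_factory=lambda: uuid.uuid4().hex)
    timestamp: float = field(default_factory=time.time)


@dataclass
class AgentRun:
    """The organizing unit: one goal, many actions, one outcome."""
    goal: str
    run_id: str = field(default_factory=lambda: uuid.uuid4().hex)
    actions: List[Action] = field(default_factory=list)
    outcome: Optional[str] = None

    def record(self, kind: str, name: str,
               parent_id: Optional[str] = None, **detail: Any) -> Action:
        """Append an action to the run and return it."""
        action = Action(kind=kind, name=name, detail=detail, parent_id=parent_id)
        self.actions.append(action)
        return action

    def path_to(self, action_id: str) -> List[Action]:
        """Walk parent links back to the root, returning the chain in order."""
        by_id = {a.action_id: a for a in self.actions}
        path: List[Action] = []
        current = by_id.get(action_id)
        while current is not None:
            path.append(current)
            current = by_id.get(current.parent_id) if current.parent_id else None
        return list(reversed(path))


# Usage: record a three-step run, then rebuild the chain behind the last step.
run = AgentRun(goal="Summarize open support tickets")
search = run.record("tool_call", "ticket_search", query="status:open")
read = run.record("data_access", "tickets_db", parent_id=search.action_id, rows=42)
summary = run.record("tool_call", "summarize", parent_id=read.action_id)
run.outcome = "summary delivered"

chain = [a.name for a in run.path_to(summary.action_id)]
print(chain)  # ['ticket_search', 'tickets_db', 'summarize']
```

Keeping the parent link on every action is the design choice that matters here. A flat log of tool calls shows what ran, while the links show how one action led to the next.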

Enrich traces with trust and policy context. Raw execution data shows what happened. But the question teams actually need answered is: should this have happened? That means attaching context to every action. What is the trust profile of the tool that was called? What is the sensitivity of the data that was accessed? Did a policy evaluate this action, and what was the result?
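As a rough illustration of this kind of enrichment, the sketch below attaches a trust profile, a data sensitivity label, and a policy decision to a single action. Everything in it is assumed for the example: the lookup tables, the labels, the one policy rule, and the names `enrich` and `evaluate_policy`. A real deployment would pull these from its own tool registry, data catalog, and policy engine.

```python
from dataclasses import dataclass
from typing import Optional, Tuple

# Hypothetical registries; real values would come from your own systems.
TOOL_TRUST = {"ticket_search": "verified", "web_fetch": "unverified"}
DATA_SENSITIVITY = {"tickets_db": "internal", "customer_pii": "restricted"}


@dataclass
class EnrichedAction:
    tool: str
    resource: Optional[str]
    tool_trust: str          # trust profile of the tool that was called
    data_sensitivity: str    # sensitivity of the data that was accessed
    policy_decision: str     # "allow" or "deny"
    policy_reason: str       # why the policy decided that way


def evaluate_policy(tool_trust: str, data_sensitivity: str) -> Tuple[str, str]:
    """A single illustrative rule: only verified tools may touch restricted data."""
    if data_sensitivity == "restricted" and tool_trust != "verified":
        return "deny", "only verified tools may access restricted data"
    return "allow", "no rule matched"


def enrich(tool: str, resource: Optional[str] = None) -> EnrichedAction:
    """Attach trust, sensitivity, and policy context to a raw action."""
    trust = TOOL_TRUST.get(tool, "unknown")
    if resource is None:
        sensitivity = "none"
    else:
        sensitivity = DATA_SENSITIVITY.get(resource, "unclassified")
    decision, reason = evaluate_policy(trust, sensitivity)
    return EnrichedAction(tool, resource, trust, sensitivity, decision, reason)


# Usage: the same kind of raw event, two very different answers to
# "should this have happened?"
ok = enrich("ticket_search", "tickets_db")
blocked = enrich("web_fetch", "customer_pii")
print(ok.policy_decision, "/", blocked.policy_decision, "/", blocked.policy_reason)
```

Unknown tools fall back to an "unknown" trust profile rather than being treated as verified, so a tool missing from the registry cannot reach restricted data by default.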

Build feedback loops, not passive dashboards. Observability for agents isn’t something you check when an incident happens. It’s a continuous loop. Every agent run generates data that can improve guardrails, refine policies, and create better testing scenarios. The system should get smarter as you operate it.
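One way to picture such a loop is a small job that reads observed actions and produces two outputs: a list of tools whose guardrails deserve review, and regression cases built from denied actions. The sketch below assumes a simple observation format (`goal`, `tool`, `decision`) and arbitrary thresholds. Both are placeholders for illustration, not recommended values.

```python
from collections import Counter
from typing import Dict, List


def tools_needing_review(observations: List[Dict[str, str]],
                         min_calls: int = 3,
                         max_deny_rate: float = 0.5) -> List[str]:
    """Flag tools that are called often enough and denied too often."""
    calls: Counter = Counter()
    denies: Counter = Counter()
    for obs in observations:
        calls[obs["tool"]] += 1
        if obs["decision"] == "deny":
            denies[obs["tool"]] += 1
    return sorted(
        tool for tool, n in calls.items()
        if n >= min_calls and denies[tool] / n > max_deny_rate
    )


def to_regression_cases(observations: List[Dict[str, str]]) -> List[Dict[str, str]]:
    """Turn every denied action into a test scenario that must stay denied."""
    return [
        {"goal": o["goal"], "tool": o["tool"], "expected_decision": "deny"}
        for o in observations
        if o["decision"] == "deny"
    ]


# Usage with made-up production observations.
observed = [
    {"goal": "research competitor", "tool": "web_fetch", "decision": "deny"},
    {"goal": "research competitor", "tool": "web_fetch", "decision": "deny"},
    {"goal": "check docs", "tool": "web_fetch", "decision": "allow"},
    {"goal": "summarize tickets", "tool": "ticket_search", "decision": "allow"},
    {"goal": "summarize tickets", "tool": "ticket_search", "decision": "allow"},
    {"goal": "triage tickets", "tool": "ticket_search", "decision": "allow"},
]

flagged = tools_needing_review(observed)
cases = to_regression_cases(observed)
print(flagged)     # ['web_fetch']
print(len(cases))  # 2
```

The flagged list feeds policy refinement and the regression cases feed testing, so each batch of runs leaves the guardrails and the test suite slightly better informed.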

Who needs it and why

Different teams come to agentic observability with different needs, but they all start from the same problem: they can’t see what agents actually do.

Developers and builders need to debug non-deterministic behavior. When an agent produces an unexpected result, they need to trace the full chain of actions that led there, not reconstruct it from scattered logs. They also need safe environments to test new tools and models before rolling them out.

Security and risk teams need audit trails that show what an agent did, what data it touched, and whether controls were enforced. They need to validate that guardrails work in practice, not just in theory. And they need to catch patterns like unauthorized data access or tool misuse before they become incidents.

Operations and platform teams need to monitor the health and reliability of AI features at scale. That means detecting regressions when models or tools change, distinguishing normal agent behavior from drift, and making improvements based on real production patterns.
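Telling normal behavior from drift can start with something simple. The sketch below compares how often each tool is called in a baseline window against a current window, using total variation distance. This is our choice of technique for the illustration, and the tool names and the 0.25 threshold are assumptions, not recommendations.

```python
from collections import Counter
from typing import Dict, List


def tool_distribution(tool_calls: List[str]) -> Dict[str, float]:
    """Convert a list of tool names into relative call frequencies."""
    counts = Counter(tool_calls)
    total = sum(counts.values())
    if total == 0:
        return {}
    return {tool: n / total for tool, n in counts.items()}


def total_variation(p: Dict[str, float], q: Dict[str, float]) -> float:
    """Total variation distance: 0.0 for identical mixes, 1.0 for disjoint ones."""
    tools = set(p) | set(q)
    return 0.5 * sum(abs(p.get(t, 0.0) - q.get(t, 0.0)) for t in tools)


# Usage: a new tool takes over a large share of the calls after a model change.
baseline_calls = ["search"] * 8 + ["summarize"] * 2
current_calls = ["search"] * 4 + ["summarize"] * 2 + ["web_fetch"] * 4

distance = total_variation(
    tool_distribution(baseline_calls),
    tool_distribution(current_calls),
)
DRIFT_THRESHOLD = 0.25  # assumed value; tune against your own traffic
drifted = distance > DRIFT_THRESHOLD
print(round(distance, 2), drifted)  # 0.4 True
```

A shift in the tool mix is only a signal that something changed. Whether the change is a regression still has to be judged from the traces behind it.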

The three questions

Agentic observability, at its core, exists to answer three questions:

What did the agent do? A complete, traceable record of every action: tool calls, data access, execution steps, and outcomes.

Why did it do it? Context about what shaped the agent’s behavior: the model’s reasoning, the tools available, the policies in effect, and the data it encountered.

Should it be allowed to do it again? A judgment layer that evaluates each action against trust profiles, data sensitivity, and organizational policies, and feeds the result back into the system.

When teams can answer these three questions for every agent run, they can debug faster, govern with confidence, and build trust in systems that make their own decisions.

That’s what observability looks like when it’s built for agents.

See for yourself: try the BlueRock Observability Sandbox via our managed PaaS.