
The Gap in Agentic AI: Why We Built the Trust Context Engine

Bob Tinker

Co-Founder & Former CEO, BlueRock Board Member

BlueRock CEO Bob Tinker on the four patterns he keeps seeing as teams move agents from experiments to production — and why context, not more logs, is what's actually missing.

Introduction

Agentic systems are exciting because they unlock a completely new way to build software. Instead of hard-coding every path, we can now build systems that reason, choose tools, and take action dynamically in the real world.

That’s the opportunity — and it’s a big one.

But it also changes the game.

When agentic software starts deciding what to do at runtime, the old ways of understanding and controlling behavior stop working. It’s no longer enough to inspect code, review integrations, or assume execution will follow a predefined path. Agents behave based on the context around them, and that means teams need a very different way to understand what is actually happening once execution begins.

That’s what we kept seeing again and again: teams moving fast, building impressive things, but struggling to answer the most important question once agents started taking real action:

What exactly is this system doing — and can we control it with confidence?

At BlueRock, we believe control starts with understanding. And understanding starts with context.

That’s why we built the Trust Context Engine.


The Missing Layer: Context Around Agentic Execution

Most of the AI landscape today focuses on what goes into the model — prompts, retrieval, memory, instructions. That’s important. But it’s not where production agents cause real damage.

The real challenge starts after the model makes a decision. An agent selects a tool. It calls an MCP server. It exercises a capability. Something changes downstream at runtime.

That’s where real impact happens — and where most teams lose clarity.

They can see that something ran. They might even trace parts of it. But they can’t confidently answer:

What exactly happened?

What did the agent connect to?

Was that component appropriate?

What actually happened downstream?

This is the missing layer.

What the Trust Context Engine Does

The Trust Context Engine solves this by attaching Trust Context to execution.

Not prompt context. Not model inputs.

Execution.

At each step of the Agentic Action Path, BlueRock captures and attaches:

what component is involved

what capability is being used

who owns it

how it’s classified

how it behaves in practice

what happens next

This combines two things teams have never had in one place:

what is known about a component before execution

and what is observed as that component is actually used at runtime

That combination changes everything.

Instead of reconstructing behavior from logs, teams see execution as it actually unfolds. Instead of debating what should be trusted, they can evaluate what is happening in practice. Instead of slowing down to stay safe, they can move faster with better information.

Control shifts from static rules to informed decisions.
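To make the idea concrete, here is a minimal sketch of what a single step with Trust Context attached might look like as a data structure. It is an illustration only, not BlueRock's actual schema: the class names, field names, and sample values (`tickets-mcp`, `notify-mcp`, and so on) are all assumptions chosen to mirror the fields listed above.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class KnownContext:
    """What is known about a component before execution (hypothetical fields)."""
    component: str        # e.g. an MCP server or tool name
    capability: str       # the capability being used
    owner: str            # who owns the component
    classification: str   # how it is classified, e.g. "read" or "write"


@dataclass(frozen=True)
class ObservedContext:
    """What is observed as the component is used at runtime (hypothetical fields)."""
    behavior: str                     # how it behaved in practice
    next_step: Optional[str] = None   # what happened next, if anything


@dataclass(frozen=True)
class ActionStep:
    """One step of an action path with both kinds of context attached."""
    known: KnownContext
    observed: ObservedContext


def describe(step: ActionStep) -> str:
    """Render a step as a single human-readable line."""
    nxt = step.observed.next_step or "end of path"
    return (
        f"{step.known.component}.{step.known.capability} "
        f"[owner={step.known.owner}, class={step.known.classification}] "
        f"observed={step.observed.behavior} -> {nxt}"
    )


# Sample values are invented for illustration.
step = ActionStep(
    known=KnownContext("tickets-mcp", "create_ticket", "support-team", "write"),
    observed=ObservedContext("created 1 record", next_step="notify-mcp.send_message"),
)
print(describe(step))
```

Keeping the "known" and "observed" halves as separate records reflects the point above: one half can be filled in before execution, the other only as the step actually runs.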

What I’m Seeing Across Customers

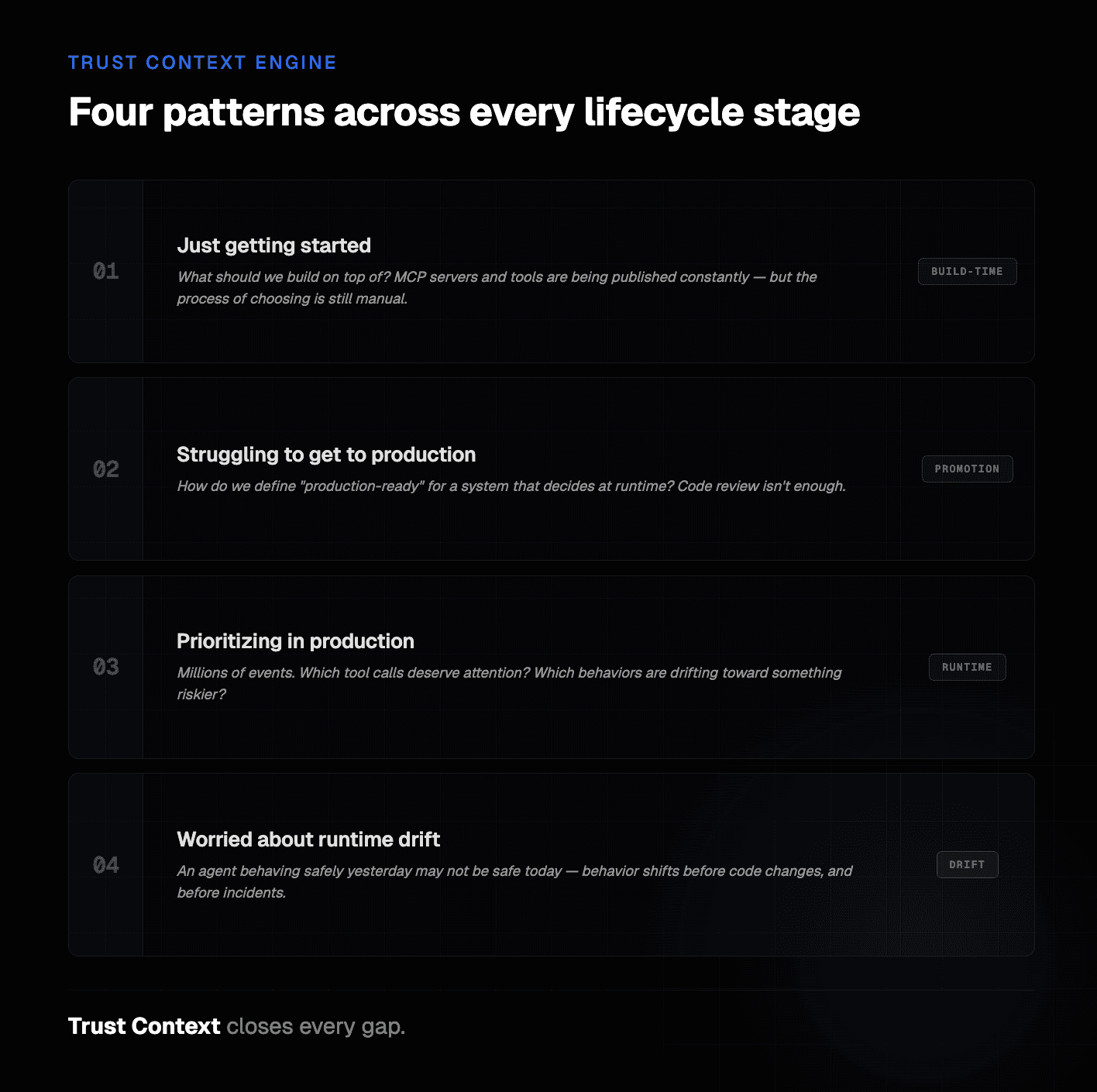

This pattern shows up at every stage of the customer lifecycle.

Just getting started

One of the earliest signs that a team is getting serious about agentic systems is that they stop asking, “Can we build this?” and start asking, “What should we build on top of?”

That sounds simple, but in practice it is becoming a real problem.

New private and public MCP servers and tools are being published constantly. Developers want to move quickly, try things, and connect capabilities that unlock value. But the process of deciding which MCPs and tools are actually worth using is still surprisingly manual.

I’ve heard versions of the same frustration repeatedly: What’s the best way to assess an MCP? How do we know whether one server is better than another? How do we avoid guessing our way into risk? How do we understand the potential damage from tools?

That’s not a governance problem in the abstract. It’s a build-time problem.

When teams are just getting started, they need a more systematic way to evaluate what they are building with, not after the fact, but before those choices get wired into real workflows. They want a way to consume that intelligence directly in the places decisions already get made — in developer workflows, internal tooling, and CI/CD — so they can make better decisions faster instead of relying on tribal knowledge, ad hoc reviews, or whoever happens to be loudest in the room.

That is one of the first places Trust Context becomes valuable. It gives teams a better input layer for deciding what to connect to before those decisions become consequential.


Struggling to Get to Prod

The next pattern shows up when teams have already built something promising and are trying to move it from experimentation into production. This is where a lot of agentic initiatives stall.

On the surface, the workflow works. The agent is taking useful action. But under the hood, the team often struggles to keep track of all the tools, MCP servers, and dependencies that are actually involved. And as soon as the workflow starts moving from “read” toward “write,” teams again struggle to define, evaluate, and automate what should qualify as production-ready.

This is where the conversation gets sharper.

Teams start asking: How do we assess every tool and MCP this workflow depends on? How do we automate that decision in CI/CD? How do we know what should be promoted — and what should not?

That is not just a visibility problem. It is a decisioning and enforcement problem.

And it’s one of the clearest reasons we built the Trust Context Engine the way we did.

What teams want here is not another manual review process. They want automated assessment. They want to consume trust context that can actually feed the workflows they already use to make promotion decisions. They want a way to evaluate the trust posture of what their agents are touching and make that actionable in the software delivery lifecycle.

That’s a big shift.

Because once agents are taking actions dynamically, “safe to deploy” is no longer something you can infer just from code review or configuration. It has to be informed by what the workflow is actually doing in practice.

That’s exactly where Trust Context fits.
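As a rough illustration of the automated promotion decision described in this section, the sketch below checks every dependency a workflow touches and reports which ones should block promotion. Everything in it is hypothetical: the `Dependency` fields, the `owner_verified` signal, and the rule itself are assumptions for the example, not a real BlueRock API or policy.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Dependency:
    """A tool or MCP server the workflow depends on (hypothetical fields)."""
    name: str
    owner_verified: bool   # assumed trust signal: has ownership been verified?
    access: str            # "read" or "write"


def promotion_blockers(deps, allow_unverified_reads=True):
    """Return the names of dependencies that should block promotion.

    Assumed rule: an unverified dependency with write access always blocks;
    an unverified read-only dependency blocks only in strict mode.
    """
    blockers = []
    for dep in deps:
        if dep.owner_verified:
            continue
        if dep.access == "write" or not allow_unverified_reads:
            blockers.append(dep.name)
    return blockers


# Invented dependencies for illustration.
deps = [
    Dependency("crm-mcp", owner_verified=True, access="write"),
    Dependency("web-search", owner_verified=False, access="read"),
    Dependency("shell-exec", owner_verified=False, access="write"),
]

blockers = promotion_blockers(deps)
print("promote" if not blockers else "blocked by: " + ", ".join(blockers))
```

In a CI/CD job, a non-empty blocker list would typically translate into a failing exit code, so the promotion decision is made by the pipeline rather than by a manual review.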

Prioritizing in Prod

The third pattern shows up once teams are already live.

At that point, the challenge is no longer “Can we build this?” or even “Can we ship this?” It becomes: Can we operate this without getting buried?

Agentic workflows generate an enormous amount of activity. Millions of events are not unusual. The problem is that events are not equally important, yet most teams do not have a good way to separate the meaningful ones from the noise.

That creates a dangerous operating environment.

Teams are flooded with signals, but still struggle to answer the questions that matter most:

Which tool calls deserve attention?

Which runtime behaviors are normal?

Which actions are drifting into something riskier?

What should we actually prioritize right now?

This is where Trust Context becomes operationally critical.

Without it, teams are just looking at volume. With it, they can start to prioritize based on what actually matters — which capabilities were invoked, what kind of action occurred, what downstream effect it had, and whether the behavior is consistent with what they expected.
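One simple way to picture this kind of prioritization is a score built from the same signals: what kind of action occurred, whether it had a downstream effect, and whether it matched expectations. The sketch below is only that, a picture. The `Event` fields, the weights, and the tool names are assumptions for illustration, and a real system would tune them against its own data.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Event:
    """A runtime event with context attached (hypothetical fields)."""
    tool: str
    action: str              # "read", "write", or "delete"
    downstream_effect: bool  # did something change downstream?
    expected: bool           # is this consistent with expected behavior?


# Assumed weights: riskier kinds of action start with a higher score.
ACTION_WEIGHT = {"read": 1, "write": 3, "delete": 5}


def priority(event: Event) -> int:
    """Score an event; higher means it deserves attention sooner."""
    score = ACTION_WEIGHT.get(event.action, 1)
    if event.downstream_effect:
        score += 2
    if not event.expected:
        score += 4
    return score


def top_events(events, limit=3):
    """Return the highest-priority events, most important first."""
    return sorted(events, key=priority, reverse=True)[:limit]


# Invented events for illustration.
events = [
    Event("docs-search", "read", downstream_effect=False, expected=True),
    Event("crm-update", "write", downstream_effect=True, expected=True),
    Event("storage-admin", "delete", downstream_effect=True, expected=False),
]

for event in top_events(events, limit=2):
    print(priority(event), event.tool)
```

With these sample values, the unexpected delete scores 11 and the expected write scores 5, so a routine read never competes with them for attention.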

Worried about Agentic Runtime Drift

Agents are non-deterministic by design. That is part of what makes them useful. But it also means behavior can drift over time in ways that are hard to catch early if all you are watching is raw activity. An agent that was behaving safely yesterday may start taking riskier runtime actions tomorrow because its decision path has shifted, its dependencies changed, or its environment evolved.

That is not a theoretical concern. It is exactly the kind of issue teams need to catch before it becomes a production incident.

Trust Context gives teams a way to do that.

If an agent begins behaving outside expected patterns, that change shows up in the Trust Context around its execution. Teams can flag it for review. They can tighten boundaries. They can sandbox the agent before the blast radius expands. That is a very different operational posture than waiting for something to break and then trying to reconstruct what happened afterward.

And I think that is where the market is headed next.

Not just more agent activity.

Not just more automation.

But a much stronger need to understand which behaviors matter — and act on them in time.
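To show what catching drift early could look like mechanically, here is a minimal sketch that compares the capabilities an agent exercises now against a baseline of what it exercised before, then suggests a response in line with the options above. The capability names, the `risky` set, and the three response labels are assumptions for the example, not a description of how BlueRock detects drift.

```python
from collections import Counter


def drift_report(baseline, observed):
    """Count capabilities in `observed` that never appear in `baseline`."""
    known = set(baseline)
    return Counter(cap for cap in observed if cap not in known)


def recommended_action(report, risky):
    """Suggest a response to drift (labels are hypothetical).

    No new capabilities: nothing to do. New capabilities that include a
    risky one: sandbox. Any other new capability: flag for review.
    """
    if not report:
        return "none"
    if set(risky) & set(report):
        return "sandbox"
    return "flag_for_review"


# Invented capability names for illustration.
baseline = ["files.read", "tickets.create", "files.read"]
observed = ["files.read", "files.delete", "files.delete", "http.post"]
risky = {"files.delete"}

report = drift_report(baseline, observed)
print(dict(report), recommended_action(report, risky))
```

A set difference like this only catches capabilities that are entirely new. Shifts in how often a known capability is used would need a frequency comparison on top of it.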

Why This Matters

Agentic systems are moving fast:

from read-only → real actions

from isolated → connected environments

from experiments → production workflows

As that happens, the problem changes. It’s no longer:

“Can we build this?”

It’s:

“Can we understand and control what happens when it’s running? Can we automate the path to prod?”

That requires a new layer — one that travels with execution.

That’s what Trust Context provides.

Closing

We built the Trust Context Engine because we kept seeing the same thing:

Teams are ready to build and run agents. What they’re missing is the context to make good decisions quickly.

That gap shows up when developers choose what to build with.

It shows up when teams decide what to promote.

It shows up when workflows hit production and no one can clearly explain what happened.

Trust Context closes that gap.

It gives teams the ability to understand execution, make better decisions, and move faster without losing control.

And in our view, that’s what the next generation of agentic infrastructure requires. Learn more on our new website.

Let’s build.

FAQ

What is the Trust Context Engine and what problem does it solve?

The Trust Context Engine provides the identity, capability, and trust intelligence that agentic systems need to operate safely in production. Because agents generate behavior dynamically at runtime — selecting tools, invoking MCP servers, and triggering downstream actions — the context needed to understand and govern that behavior rarely travels with execution. The Trust Context Engine continuously enriches the Agentic Action Path with that missing context.

How does the Trust Context Engine differ from standard logging or tracing?

Logs and traces show that actions occurred — but they don't capture which agent initiated them, what capabilities were invoked, or how execution propagated across systems. The Trust Context Engine attaches structured identifiers, capability metadata, and trust signals to every step of execution, giving teams the context needed to interpret and govern behavior — not just observe it.

Where does the trust intelligence come from?

The Trust Context Engine is continuously enriched by the MCP Trust Registry, which provides structured trust intelligence about MCP servers and tools across the ecosystem — including ownership verification, capability exposure, and operational posture. This allows organizations to evaluate which systems agents can safely interact with before and during execution.

What other BlueRock capabilities does the Trust Context Engine power?

It serves as the foundational context layer for both Observability and Guardrails. Observability uses trust context to surface the agents, tools, and interactions that actually shaped execution — rather than requiring teams to sort through raw telemetry. Guardrails use the same context to make precise, real-time enforcement decisions based on agent identity, tool capabilities, and MCP server trust posture.