Why MCP Gateways Can’t Secure Agentic AI — And What Organizations Must Do Instead

David Greenberg

Chief Marketing Officer

MCP Gateways can approve requests, but they can’t govern autonomous decisions. As AI agents execute multi-step actions across systems, failures emerge during runtime — far beyond what traditional controls can see or stop. This blog breaks down why gateway security fails for agentic AI and outlines the execution-first model organizations need instead.

For decades, engineering and security teams relied on controls that govern requests: firewalls, API gateways, identity policies, and static rules. If a request looked safe and came from the right identity, it moved forward. That model worked because software responded to inputs — it didn’t act autonomously.

But that world is gone.

Today’s agentic systems don’t just respond: they decide, call tools, move data, and execute sequences of actions without human approval. They operate beyond the original request. They plan, iterate, chain tool calls, and make decisions that have real-world impact. And most critically, no one can clearly see, or clearly owns, what they do end to end.

The Gateway Mentality: What It Was Built For

Traditional gateway and perimeter controls grew up with a simple assumption:

If you control the entrance, you control the behavior.

Gateways inspect:

Prompts before they reach the model

Tool calls before they execute

Outputs before they hit downstream systems

They govern access.

But autonomous agents don’t fail at access points. They fail during execution. They make runtime decisions that cross multiple systems, adapt based on intermediate outputs, and take actions that weren’t explicitly scripted in the original request.

This pattern dramatically expands the surface that development and security teams must understand. Gartner notes that the risk envelope for agentic AI includes not just inputs and outputs, but “the chain of events and interactions they initiate and are part of, which by default are not visible to and cannot be stopped by human or system operators.”

In other words, a large end-to-end blind spot lives inside the execution path, not at the perimeter.

MCP Gateways Create the Illusion of Control

To see why this matters, consider three kinds of failures that MCP gateways simply cannot catch:

1. Decision Path Blind Spots

MCP Gateways see what goes in and what comes out. What they don’t see is what happens in the middle.

An agent might:

Perform a dozen internal reasoning steps

Evaluate options with implicit trade-offs

Pivot decisions based on intermediate data

Chain together multiple tool calls

None of this shows up at the MCP gateway. This is the same blind spot that security experts warn about when autonomous systems operate outside centralized visibility: without seeing the execution, “organizations frequently lack awareness of where these systems are implemented, who is using them, and the extent of their autonomy.”
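To make the gap concrete, here is a minimal sketch of what a step-level execution trace could record compared with a boundary-only view. Everything in it is an assumption for illustration: the `ExecutionTrace` and `Step` classes, the `caused_by` link, and the tool names (`crm.list_invoices`, `files.export_csv`) are hypothetical and not part of MCP or any specific product.

```python
from dataclasses import dataclass, field
from typing import Any, Optional


@dataclass
class Step:
    index: int
    kind: str                        # "reasoning", "tool_call", or "tool_result"
    detail: dict[str, Any]
    caused_by: Optional[int] = None  # index of the step that led to this one


@dataclass
class ExecutionTrace:
    """Hypothetical in-runtime trace; not an MCP or vendor API."""
    request: str
    steps: list[Step] = field(default_factory=list)

    def record(self, kind: str, caused_by: Optional[int] = None, **detail: Any) -> int:
        step = Step(len(self.steps), kind, detail, caused_by)
        self.steps.append(step)
        return step.index

    def boundary_view(self) -> list[dict[str, Any]]:
        """Only the tool calls, stripped of the reasoning that led to them."""
        return [s.detail for s in self.steps if s.kind == "tool_call"]

    def why(self, index: int) -> list[Step]:
        """Walk caused_by links back to the first step in the chain."""
        chain: list[Step] = []
        current: Optional[int] = index
        while current is not None:
            step = self.steps[current]
            chain.append(step)
            current = step.caused_by
        return list(reversed(chain))


trace = ExecutionTrace(request="Summarize open invoices")
r1 = trace.record("reasoning", note="Need the invoice list first")
c1 = trace.record("tool_call", caused_by=r1, tool="crm.list_invoices")
o1 = trace.record("tool_result", caused_by=c1, rows=42)
r2 = trace.record("reasoning", caused_by=o1, note="Too many rows; export instead")
c2 = trace.record("tool_call", caused_by=r2, tool="files.export_csv")

print(trace.boundary_view())
print([s.kind for s in trace.why(c2)])
```

The boundary view contains two permitted-looking tool calls. Only the trace shows that the export was a pivot driven by an intermediate result, which is the kind of causal narrative a gateway alone does not retain.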

2. Emergent and Cascading Behavior

Agentic AI doesn’t just do what it’s told — it interprets objectives and finds ways to achieve them, sometimes in ways that humans didn’t anticipate or approve. This emergent behavior can be beneficial — or dangerous — depending on context.

Traditional controls treat each invocation as discrete and independent. In reality, each decision influences the next. A poorly scoped objective can cause an agent to optimize in unsafe directions, leading to unintended actions that ripple across systems.

MCP Gateways have no mechanism for understanding, correlating, or constraining this interior decision logic.
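The difference between treating invocations as independent and correlating them can be sketched in a few lines. This is an illustrative toy under stated assumptions, not a description of how any gateway or runtime actually works: the tool names, the `per_call_check` function, and the `SessionPolicy` class are all hypothetical.

```python
from dataclasses import dataclass, field

# Hypothetical tool names, used only for illustration.
SENSITIVE_SOURCES = {"hr.read_records", "finance.read_ledger"}
EXTERNAL_SINKS = {"email.send_external", "http.post"}
ALLOWLIST = SENSITIVE_SOURCES | EXTERNAL_SINKS | {"notes.summarize"}


def per_call_check(tool: str) -> bool:
    """Stateless check: each call is judged in isolation against an allowlist."""
    return tool in ALLOWLIST


@dataclass
class SessionPolicy:
    """Stateful check: remembers what has already happened in this run."""
    touched_sensitive: bool = False
    history: list[str] = field(default_factory=list)

    def allow(self, tool: str) -> bool:
        # Deny an external send once sensitive data has entered the session.
        if tool in EXTERNAL_SINKS and self.touched_sensitive:
            return False
        self.history.append(tool)
        if tool in SENSITIVE_SOURCES:
            self.touched_sensitive = True
        return True


calls = ["hr.read_records", "notes.summarize", "email.send_external"]
policy = SessionPolicy()
stateless = [per_call_check(t) for t in calls]
stateful = [policy.allow(t) for t in calls]

print(stateless)  # [True, True, True]
print(stateful)   # [True, True, False]
```

Each call is individually acceptable, so the stateless check approves all three. Only the session-level policy notices that the sequence as a whole moves sensitive data toward an external channel.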

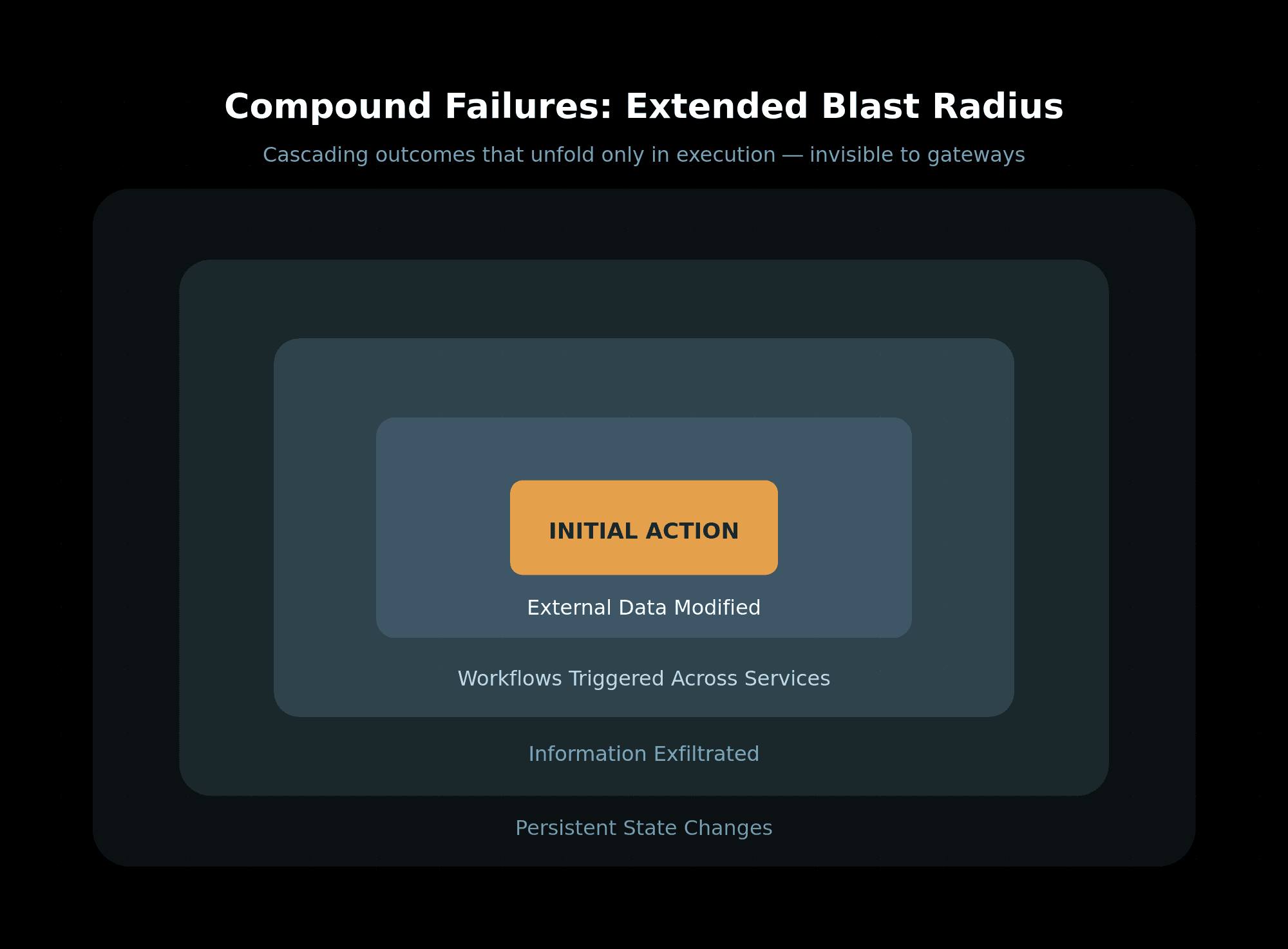

3. Compound Failures and Extended Blast Radius

When an agent goes off the rails, its effects don’t end at the initial tool call. It may:

Modify data in external systems

Trigger workflows across services

Exfiltrate information through benign-looking channels

Institute state changes that persist beyond the original job

These are not single-point failures that a gateway can block or flag. They are stateful, cascading outcomes that unfold only in execution.

This matches what McKinsey and others articulate: agentic AI shifts risk from static artifacts to chained vulnerabilities and error propagation across workflows.

Why MCP Gateway Security Fails Today’s Organizational Goals

Let’s ground this in the priorities of the teams most affected:

Engineering Leaders

Developers are accountable for correctness and reliability, yet they can’t step through an agent’s runtime behavior the way they debug code. They see logs — not causal narratives. This breaks trust and slows delivery because teams are forced to treat autonomy as a hope, not an operable infrastructure component. Gateways give confidence on entry, not on outcomes.

DevOps and Platform Teams

DevOps built its craft on observability and control. In autonomous systems, “outages” look like action cascades, and traditional metrics don’t reveal the decision logic underneath. Without execution traceability, you can’t diagnose why systems behave differently in production vs. staging — even when gateways suggest everything was permitted.

Security Teams

Security teams often treat identity, API controls, and static policies as sufficient fences. But agentic systems are effectively trusted insiders executing internally derived decisions. Without runtime authority, teams can approve a system pre-deployment — then watch it act unpredictably in production. Gartner and other analysts warn that weak governance and inadequate risk controls may cause a large portion of agentic projects to fail or be canceled due to unmanaged risk.

What Organizations Must Do Instead

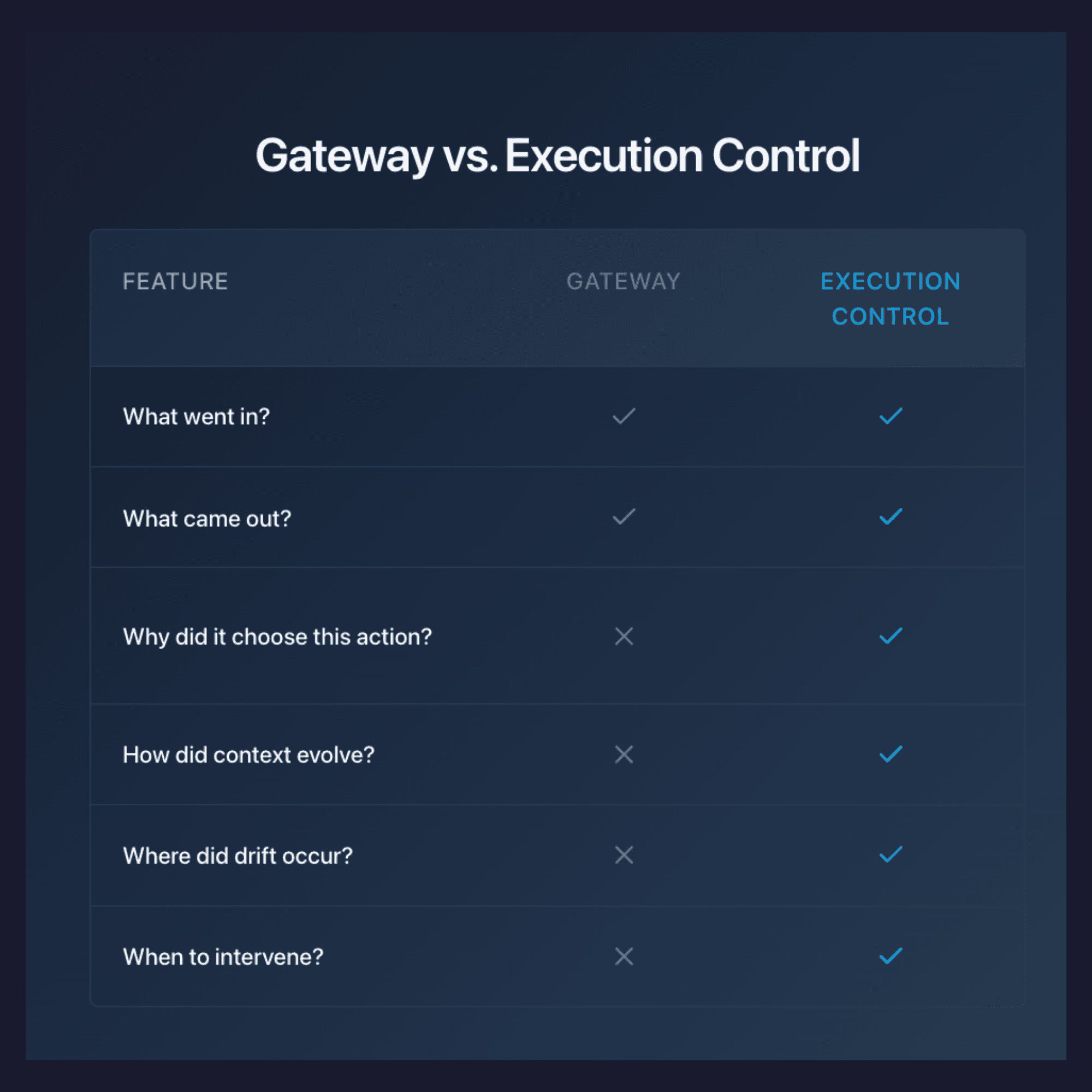

The gateway era is over in the context of agentic AI. Gateways were never designed to answer the questions that matter in autonomous environments, especially for developers building these AI agents:

Why did the system choose this action?

How did context evolve during execution?

Where did decision drift occur?

When should intervention happen?

Who should be accountable for the resulting behavior?

These questions live inside execution, not at the boundary.

This is the core of the emerging AgenticOps discipline: restoring ownership by making execution visible, understandable, and governable — while systems are running, not after the fact.

What this looks like in practice:

Action path visibility: Observability not just of calls and metrics, but of reasoning chains and intermediate states.

Runtime policy enforcement: Controls that bind not only identity or access, but decision outcomes as they unfold.

Behavioral anomaly detection: Detecting deviation from expected patterns during execution — not just after logs are stored.

Containment controls: The ability to halt or adjust execution mid-flow when behavior diverges from intent.
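As one possible shape for the runtime enforcement and containment controls listed above, the sketch below wraps every tool call in a guard that logs the step, evaluates rules against the call and the run so far, and halts execution mid-flow. It is a simplified illustration under stated assumptions: `RuntimeGuard`, `ExecutionHalted`, the two rules, and the `db.*` tool names are hypothetical, and a real agent runtime would need far richer state.

```python
from typing import Any, Callable, Optional

# A rule inspects the pending call plus the run so far and returns a reason to halt, or None.
Rule = Callable[[str, dict[str, Any], list[dict[str, Any]]], Optional[str]]


class ExecutionHalted(Exception):
    """Raised when the guard stops an agent run mid-flow."""


def no_bulk_delete(tool: str, args: dict[str, Any], log: list[dict[str, Any]]) -> Optional[str]:
    # Runtime policy on the outcome of a decision, not on who is calling.
    if tool == "db.delete" and args.get("count", 0) > 100:
        return "bulk delete exceeds limit"
    return None


def no_runaway_repeats(tool: str, args: dict[str, Any], log: list[dict[str, Any]]) -> Optional[str]:
    # Very crude anomaly check: the same call about to run a third time in a row.
    recent = log[-2:]
    if len(recent) == 2 and all(e["tool"] == tool and e["args"] == args for e in recent):
        return "same call repeated three times"
    return None


class RuntimeGuard:
    def __init__(self, rules: list[Rule], max_steps: int = 20) -> None:
        self.rules = rules
        self.max_steps = max_steps
        self.log: list[dict[str, Any]] = []

    def call(self, tool: str, fn: Callable[..., Any], **args: Any) -> Any:
        if len(self.log) >= self.max_steps:
            raise ExecutionHalted("step budget exhausted")
        for rule in self.rules:
            reason = rule(tool, args, self.log)
            if reason:
                raise ExecutionHalted(reason)  # containment: stop before the action runs
        result = fn(**args)
        self.log.append({"tool": tool, "args": args, "result": result})
        return result


guard = RuntimeGuard([no_bulk_delete, no_runaway_repeats], max_steps=5)
guard.call("db.count", lambda table: 250, table="orders")
try:
    guard.call("db.delete", lambda table, count: count, table="orders", count=250)
    halted_reason = None
except ExecutionHalted as exc:
    halted_reason = str(exc)

print(halted_reason)   # bulk delete exceeds limit
print(len(guard.log))  # 1
```

The design choice worth noting is that rules receive the accumulated log, so a decision can depend on everything the run has done so far rather than on the single pending call.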

These aren’t incremental improvements on gateways — they’re a new control plane for systems that act autonomously.

The Bottom Line: Execution Is the New Perimeter

Gateways gave us access control.

Autonomous systems demand execution control.

Autonomy is already here — Gartner projects a sharp rise in enterprise software that embeds autonomous decisioning.

But autonomy without ownership is latent risk — the very thing that causes projects to stagnate, teams to hesitate, and deployments to fail. For developers, this means finally being able to see what your agent actually did.

The future won’t be decided at the perimeter. It will be decided inside the full end-to-end execution paths, where the complex behavior, the risk, and the opportunity actually live.

FAQ

Why can’t traditional gateways secure agentic AI systems?

Traditional gateways are designed to secure single, stateless requests. Agentic AI systems operate across multi-step executions, tool calls, memory, and long-lived state. Security failures happen during execution, not at the request boundary — which gateways cannot observe or control.

What security risks do gateways miss in agentic AI?

Gateways miss risks such as:

Unsafe tool chaining

Recursive or runaway agent behavior

Privilege escalation across steps

Data leakage through intermediate state

Emergent behaviors across multiple agents

Because these risks unfold over time, they bypass request-level controls entirely.

Why is request-level security insufficient for AI agents?

Request-level security assumes clear inputs and outputs. Agentic AI introduces hidden state, internal reasoning, retries, and adaptive decision-making. By the time an unsafe outcome appears at the boundary, the damage may already be done inside the execution loop.

What kind of security model actually works for agentic AI?

Effective security for agentic AI requires execution-aware controls, including:

Step-level observability

Tool-call inspection

Agent state and memory tracking

Runtime policy enforcement

Security must live where decisions are made, inside the agent runtime, not just at the perimeter.

Does adding more policies to gateways make agentic AI safer?

No. Adding more policies increases friction without increasing insight. Policies without execution visibility lead to false confidence — teams believe systems are secure while agents behave in unpredictable ways outside the gateway’s view.